6 Pillars of LMS Scalability

Words by

Kaine Shutler

TL;DR

Key takeaways

Scalability isn't just a technical problem. Infrastructure is one of six dimensions where growth either works or it doesn't — and it's rarely the most important one.

The costs that hurt most don't appear on any invoice. Missed deals, features you couldn't build, clients who left for a competitor with a better product — these show up in slower growth, not on a P&L.

Every migration is a reset. The training companies that scale without major disruption tend to have built something they never had to move away from.

Most training companies don't think seriously about scalability until they're already in trouble. A big client pushes them to onboard five hundred learners in a week. A competitor launches something they can't replicate. A platform they've built the business on starts falling apart under the weight of their own growth.

By that point, the options are narrower than they should be and the decisions are more expensive.

We work with training companies at various stages of growth, and the pattern is consistent. The businesses that scale without major disruption tend to have made better platform decisions earlier, ones that accounted for what the business might need to do in two or three years, not just what it needed to do that month.

Scalability gets talked about mostly in technical terms. Server capacity. Concurrent users. Load times. These things matter, but they're only part of it. A platform can handle a million users and still be the thing that stops the business from growing, because infrastructure is just one of the dimensions where scale either works or it doesn't.

We think there are six. Here's how we look at each of them.

1. Infrastructure

When people talk about LMS infrastructure, they usually mean servers. That's a narrow definition - infrastructure is really everything a learner touches, which means the problem starts much earlier than most people assume.

Think about what happens before someone logs in for the first time. They read about your training, decide they want it, and click through to buy. If the checkout page feels like it belongs to a different company, something breaks in that moment. Not technically, but in terms of trust. Then they land in the LMS and it looks like a third company. By the time they actually start learning, the product has already undermined their confidence in it.

Most training companies think of this as a branding problem. It isn't. It's an infrastructure problem, because it's structural. It's what happens when a product is built in pieces rather than designed as a whole.

The same thing happens inside the platform. Many training products are really five or six tools held loosely together. The LMS is here. The community is somewhere else. Assessments live in a third place. Surveys go out through a fourth. Each requires a different login, has a different interface, follows different logic. Learners don't articulate this as a problem. They just find the product confusing and effortful, and gradually engage with it less. You see it in completion rates. You see it in renewals. You rarely see it in feedback, because nobody writes in to say the experience felt fragmented.

Then there's the technical layer, which is where most people start this conversation.

Most platform decisions are made to solve the problem in front of you at the time. The cheapest option that works. The fastest to launch. The one your developer already knows. These are reasonable decisions under the constraints that existed when you made them. The problem is that the constraints change as the business grows, and the platform doesn't change with them. What worked at two hundred learners starts to show strain at two thousand. What worked at two thousand becomes the thing holding you back at twenty thousand.

A platform that handles five hundred learners comfortably can behave very differently with five thousand. When that happens, the instinct is to find a workaround rather than fix the underlying problem. License another tool to fill the gap. Build a manual process around the limitation. Each workaround adds complexity and cost without moving the ceiling.

The companies that handle this well don't wait until the current platform is failing to think about what comes next. They start that conversation while things are still working, which means they have the time and the headroom to make good decisions rather than urgent ones. A migration planned over six months looks completely different to one that has to happen in six weeks because the system fell over.

When that conversation happens early enough, the solution is usually more straightforward than people expect. Design the ecosystem as a whole rather than assembling it in pieces. Single sign-on across every product. A consistent experience from the first marketing touchpoint through to the last lesson. A technical foundation chosen for where the business is going, not where it is today. And critically, architecture that doesn't lock you in, because the decisions you make at the start should expand your options over time, not reduce them.

2. Workflow efficiency

Infrastructure failure is visible. Workflow failure tends to become invisible, because the manual workarounds people put in place eventually just become the job. Nobody questions why they spend every Monday processing a spreadsheet of new starters. It's just Monday.

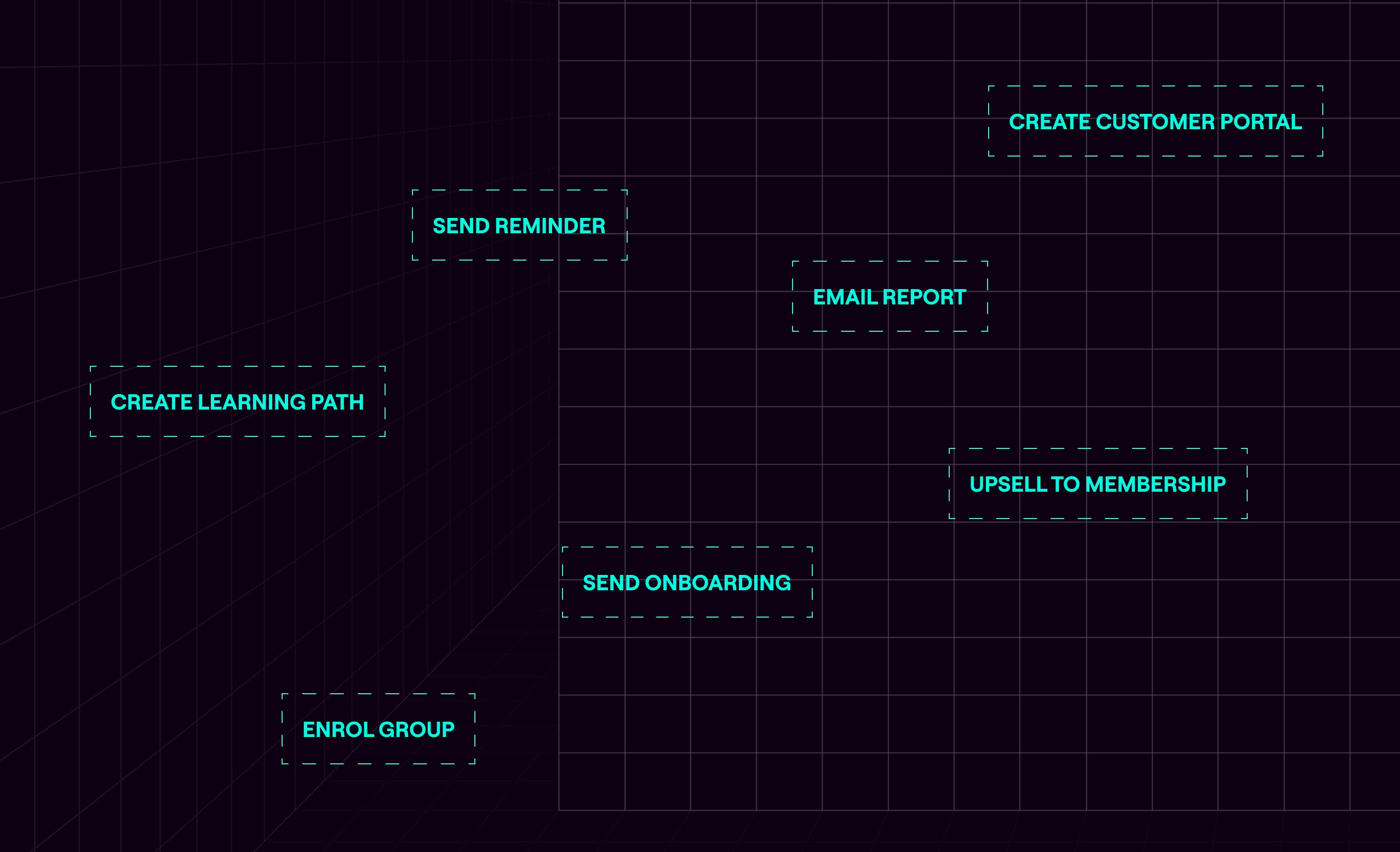

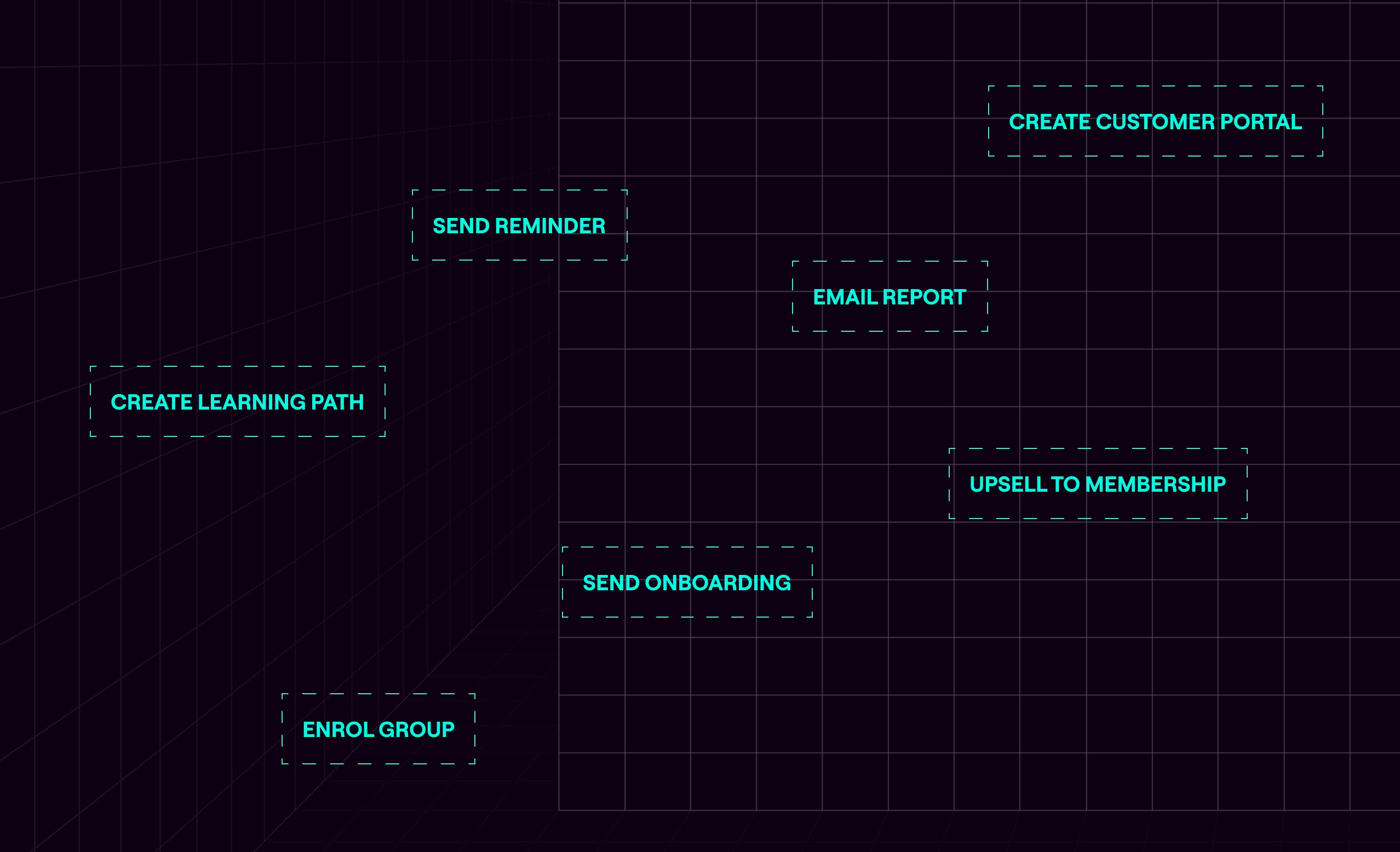

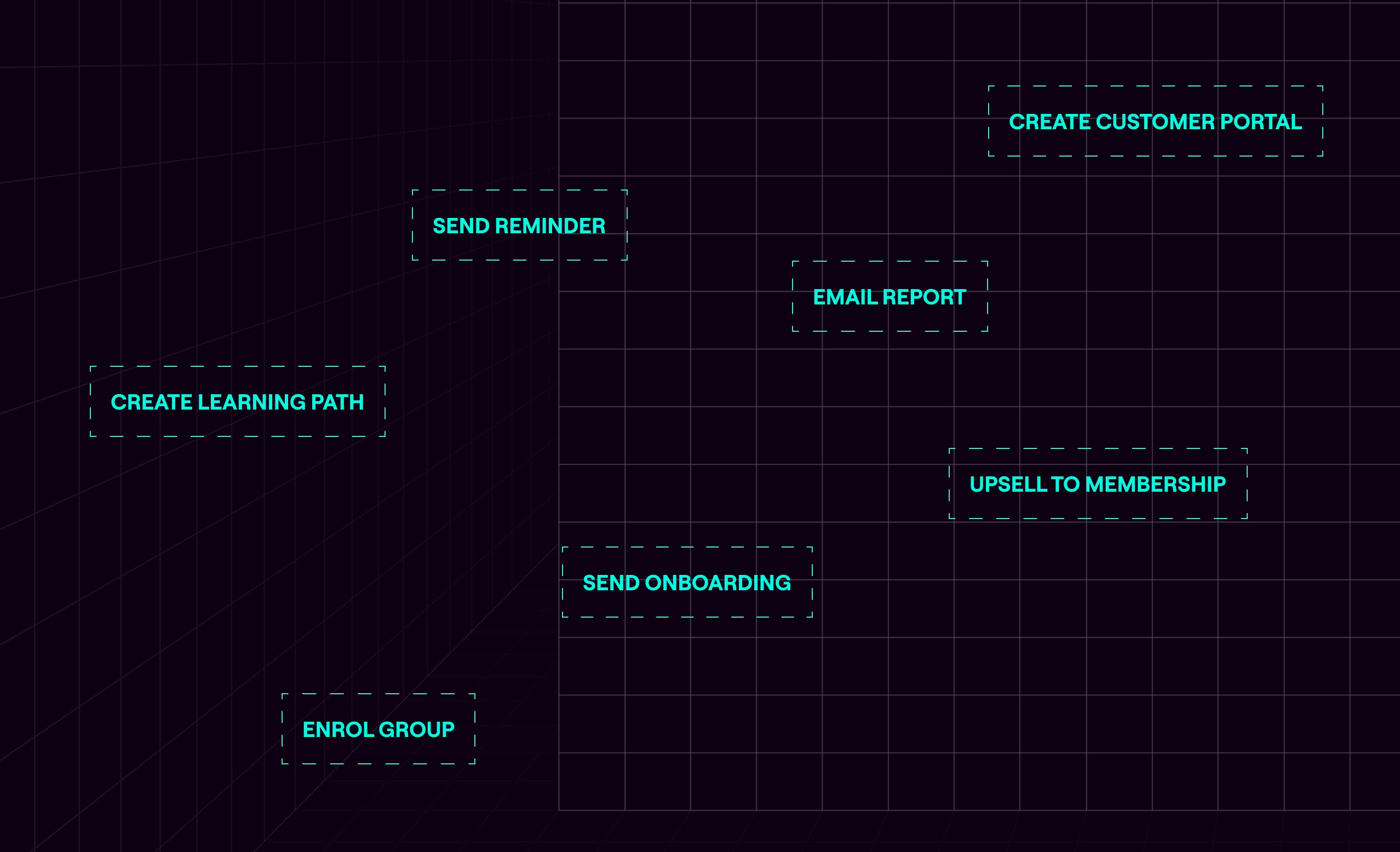

It usually starts with one person whose job involves a lot of manual tasks that should be automated. Watching for purchase notifications and manually creating user accounts. Enrolling learners. Compiling monthly reports for B2B clients by pulling data from the LMS and building spreadsheets by hand. Managing requests that pile up in a support inbox.

At a hundred users, this is manageable. At a thousand, you hire someone to help. At ten thousand, you have a team, and the team is the bottleneck.

The goal isn't to remove people from the business. It's to make sure people are doing work that requires human judgement, not work that a well-configured system should be handling automatically.

Take new cohort onboarding. A client emails a spreadsheet of thirty new starters. Someone on your team processes it, creates the accounts, assigns the learning paths, and sends the welcome emails. Multiply that by every client, every month, and it becomes a significant drain on time that could be spent elsewhere. The better model is giving clients a self-serve import tool, or better still, an integration with their HR system that handles new starters automatically the moment they're added. The client doesn't wait. Your team doesn't intervene. It just happens.
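As a rough illustration of the self-serve import described above, the sketch below turns a client-uploaded CSV of new starters into enrolments. The column names (`email`, `name`, `role`), the `ROLE_TO_PATH` mapping and the `Enrolment` type are all hypothetical; a real platform would call its own account and enrolment APIs at this point.

```python
import csv
import io
from dataclasses import dataclass

# Hypothetical mapping from a client's job roles to learning paths.
ROLE_TO_PATH = {"engineer": "safety-induction", "manager": "leadership-basics"}
DEFAULT_PATH = "general-onboarding"


@dataclass
class Enrolment:
    email: str
    name: str
    learning_path: str


def import_new_starters(csv_text: str) -> tuple[list[Enrolment], list[str]]:
    """Turn a client-uploaded CSV into enrolments, collecting row-level errors."""
    enrolments: list[Enrolment] = []
    errors: list[str] = []
    seen: set[str] = set()
    reader = csv.DictReader(io.StringIO(csv_text))
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        email = (row.get("email") or "").strip().lower()
        if "@" not in email:
            errors.append(f"line {line_no}: missing or invalid email")
            continue
        if email in seen:
            errors.append(f"line {line_no}: duplicate email {email}")
            continue
        seen.add(email)
        role = (row.get("role") or "").strip().lower()
        path = ROLE_TO_PATH.get(role, DEFAULT_PATH)
        enrolments.append(Enrolment(email, (row.get("name") or "").strip(), path))
    return enrolments, errors


sample = "email,name,role\nana@example.com,Ana,Engineer\nbad-row,Bob,Manager\n"
enrolled, problems = import_new_starters(sample)
```

Returning the errors alongside the successful rows lets the client fix their own spreadsheet without anyone on your team getting involved.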

The same applies to engagement. The most common mistake after companies start surfacing engagement data is routing it to a human and expecting them to act on it. When a learner isn't engaging, notifying their manager shouldn't be the end of the workflow. When you ask a manager to review a report and chase up a learner, you're adding workload and friction. You're also giving them the option not to bother. The system should be doing everything it reasonably can to keep learners engaged and progressing without human intervention. Reminders. Course recommendations. Re-enrolments. Nudges at the right moment in the right format. Every time a human has to intervene to keep a learner on track, you've introduced a step that may or may not happen.
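One way to picture this is as a small set of rules the system works through before a person is ever involved. The sketch below is illustrative only: the thresholds, the action names and the `LearnerActivity` type are assumptions, not a description of any particular LMS.

```python
from dataclasses import dataclass


@dataclass
class LearnerActivity:
    days_inactive: int
    reminders_sent: int
    course_completed: bool


def next_automated_action(activity: LearnerActivity) -> str:
    """Pick the next system-driven step; a human is only the last resort."""
    if activity.course_completed:
        return "recommend_next_course"
    if activity.days_inactive < 7:       # hypothetical threshold
        return "none"
    if activity.reminders_sent < 2:      # hypothetical reminder cap
        return "send_reminder"
    if activity.days_inactive < 30:      # hypothetical threshold
        return "send_short_nudge"
    return "re_enrol_and_notify_manager"
```

The manager only hears about it once the automated steps have run out, and even then the re-enrolment has already happened.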

Certifications expire. Someone is usually responsible for tracking who's due, chasing them, re-enrolling them, and updating their record when they complete. At scale it becomes a compliance risk, because the process depends on someone remembering to do it and then doing it without error. The whole thing should run automatically: expiry triggers a re-enrolment, a reminder sequence fires, and the record updates on completion without anyone touching it.
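The expiry flow above can be sketched in a few lines. Everything here is an assumption made for illustration: the 30-day renewal window, the reminder schedule, the one-year validity period and the `Certification` type itself.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

REMINDER_OFFSETS_DAYS = (30, 14, 1)  # hypothetical: days before expiry


@dataclass
class Certification:
    learner: str
    course: str
    expires_on: date
    status: str = "valid"  # "valid" or "renewal_due"
    reminders: list[date] = field(default_factory=list)


def process_expiry(cert: Certification, today: date, window_days: int = 30) -> Certification:
    """Flag a certificate for renewal and schedule its remaining reminders."""
    if cert.status == "valid" and cert.expires_on - today <= timedelta(days=window_days):
        cert.status = "renewal_due"  # a real system would re-enrol the learner here
        cert.reminders = [
            cert.expires_on - timedelta(days=offset)
            for offset in REMINDER_OFFSETS_DAYS
            if cert.expires_on - timedelta(days=offset) >= today
        ]
    return cert


def record_completion(cert: Certification, completed_on: date, validity_days: int = 365) -> Certification:
    """Update the record on completion without anyone touching it."""
    cert.status = "valid"
    cert.expires_on = completed_on + timedelta(days=validity_days)
    cert.reminders = []
    return cert
```

Run daily against every certificate, a job like this removes the dependency on someone remembering to check.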

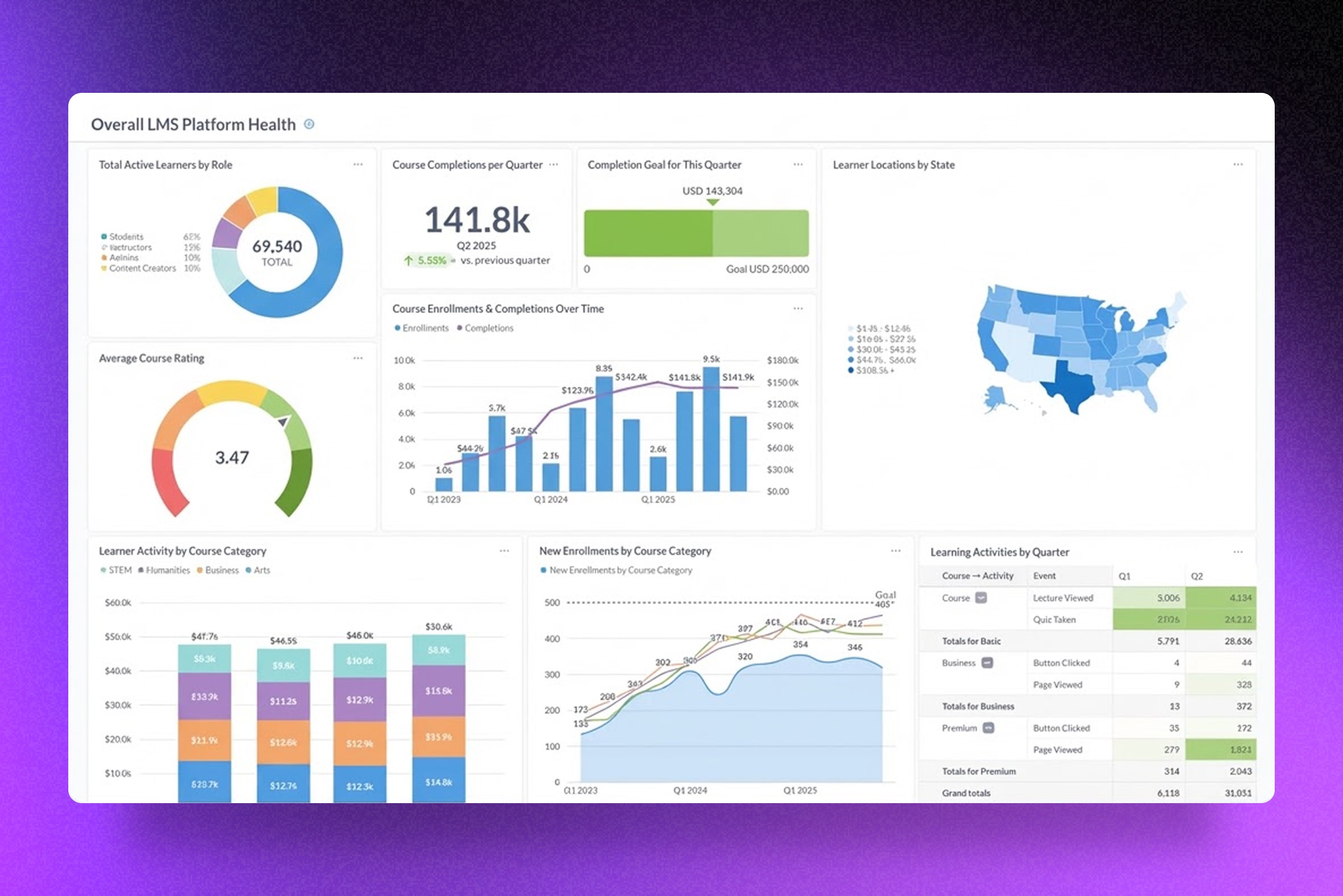

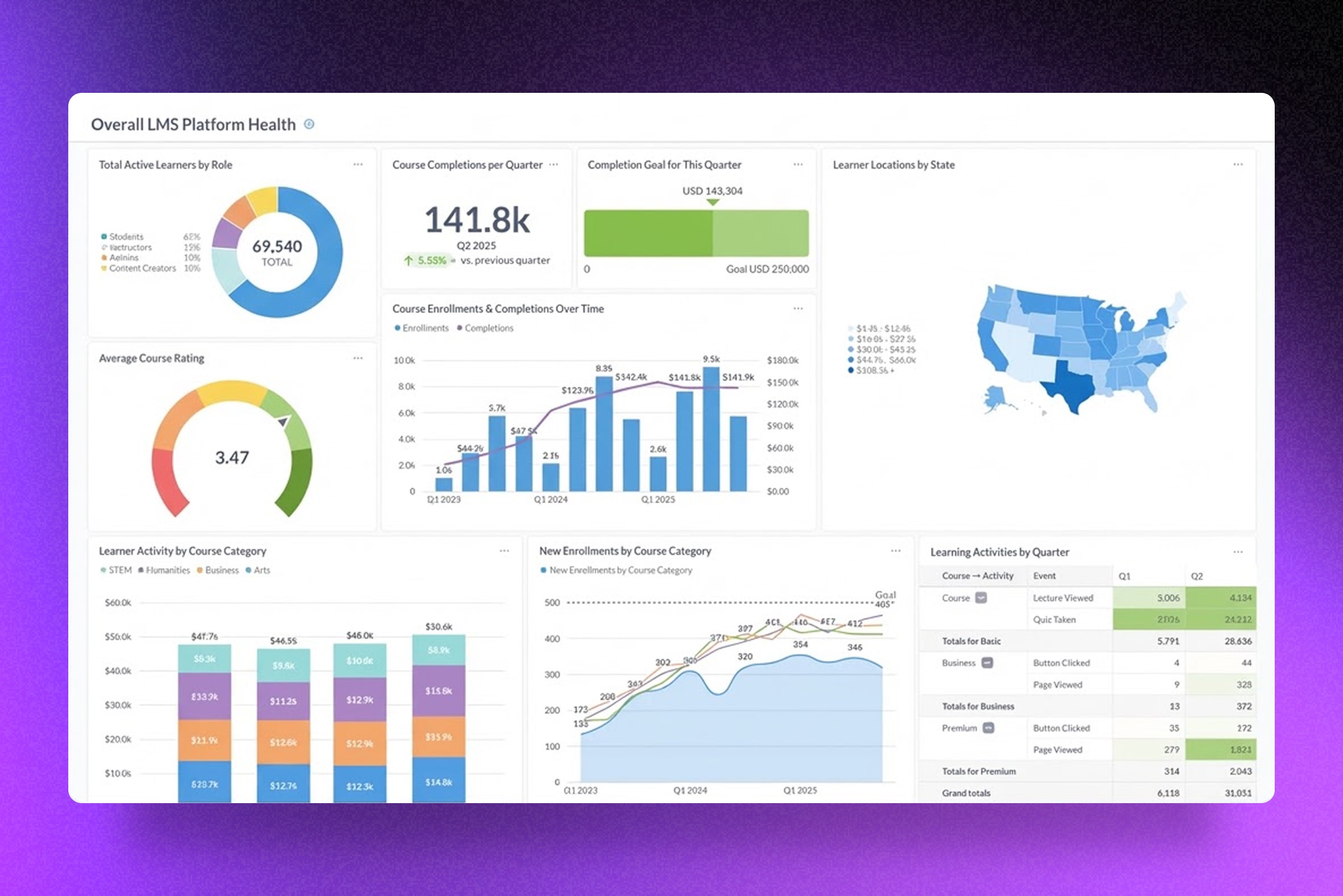

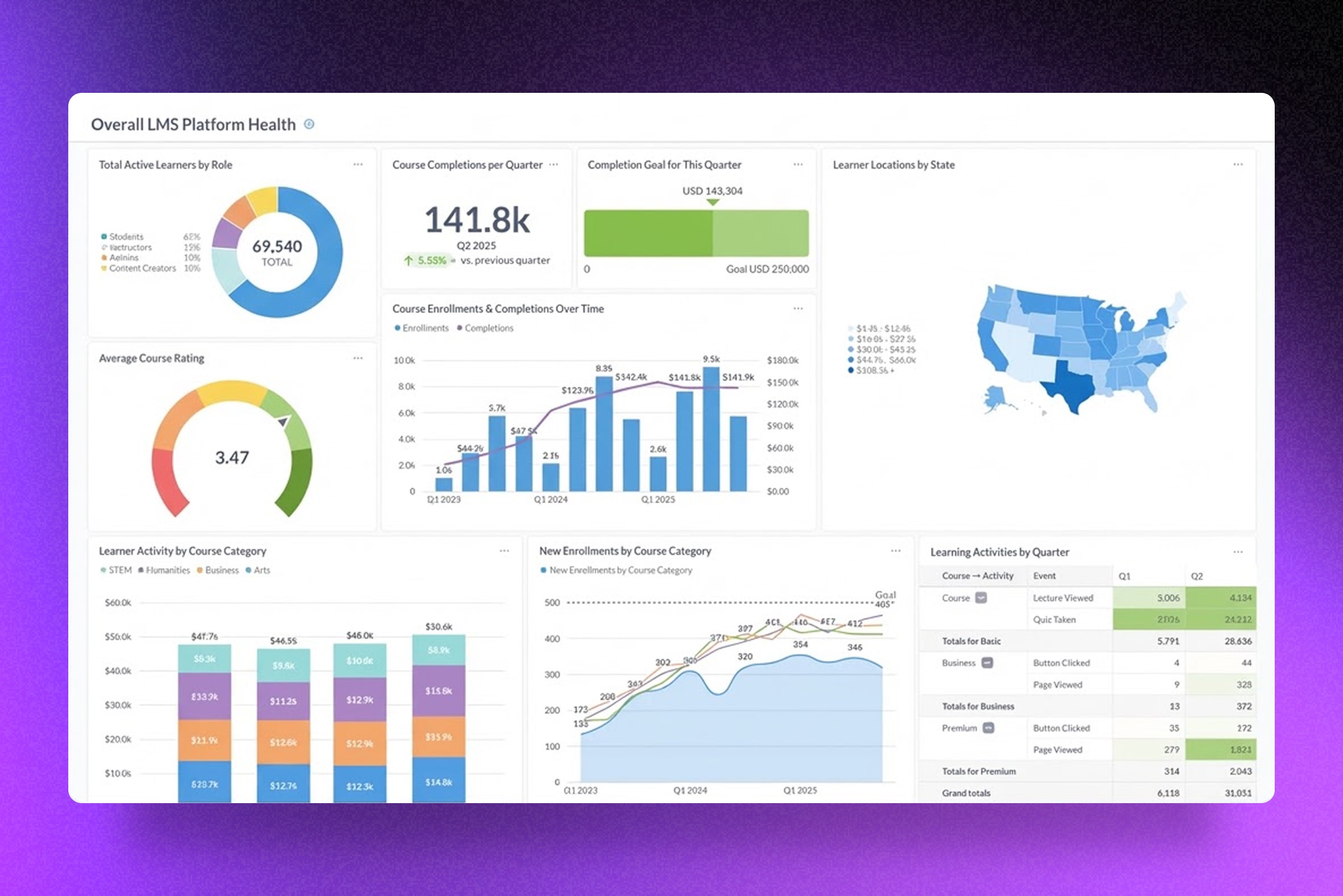

For reporting, the tools baked into most LMSs were built for a generic business, not yours. They surface raw data and leave you to interpret it. B2B training companies need to present that data in a way that demonstrates value to their clients. Which learners have completed which modules. Which clients are at compliance risk. Where engagement is dropping off. The companies that handle this well build reporting their clients can log into and use themselves. A live view of the information that matters, available without anyone having to produce it.

And if your B2B clients contact you every time they need to add a new starter or remove a leaver, that's friction for them and an administrative burden for you. Give clients the ability to manage their own users and both problems go away.

These are the most visible examples, but the principle runs through everything. Role changes that should trigger a new learning path but don't, because nobody told the LMS. Failed assessments that sit unaddressed because there's no automated follow-up. Each of these is a place where the system is asking a human to do something a system should do, and each one compounds as the business grows.

3. Cost

The cost conversation in LMS decisions almost always starts with the wrong number. The monthly fee, or the per-user rate. These are visible and easy to compare. What's harder to see is what the cost structure looks like at five times your current scale.

Per-user SaaS pricing sounds reasonable until the numbers get large. At $5 per user per month, 500 learners cost $30,000 a year. Add a 10,000-learner enterprise client and the same pricing model adds another $600,000 a year for that client alone. You can pass that cost on, but now you're more expensive than a competitor who doesn't carry it. You can absorb it, but your margins take the hit.
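The arithmetic behind those figures is simple enough to sketch. The function name is ours rather than part of any pricing tool; the rate and learner counts are the ones quoted above.

```python
def annual_per_user_cost(learners: int, monthly_rate: float) -> float:
    """Annual cost under flat per-user, per-month pricing."""
    return learners * monthly_rate * 12


current = annual_per_user_cost(500, 5)         # existing learner base
enterprise = annual_per_user_cost(10_000, 5)   # the new enterprise client alone
combined = annual_per_user_cost(10_500, 5)     # both together
```

Taken together, the 500 existing learners and the new client come to $630,000 a year at the same rate.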

And this is before accounting for price rises that have nothing to do with your growth. SaaS pricing across the industry rose by an average of 11.4 percent in 2025. But even without price rises, SaaS models are designed to cost more as you grow. Per-user pricing is the most visible version of that, but unlimited plans have their own version — features locked behind higher tiers, API limits that become relevant at scale, storage caps, support that costs extra when you actually need it. There's always something.

Nobody factors migration into the original platform decision. They should. Moving from one system to another takes months of internal time, costs money to execute, and disrupts clients in the process. When it goes wrong — data that doesn't transfer cleanly, learners who lose access, integrations that break — clients leave. And if you're on the wrong platform, you'll go through this more than once. Each time you outgrow a SaaS and move to the next one, the clock resets. When you build something designed to scale with the business, you move once.

There are also costs that never show up anywhere obvious. If your platform lacks features or integrations, you hire people to compensate. Extra support staff because the platform can't handle self-serve. Operations people because enrolments are manual. Account managers because reporting has to be compiled by hand. These show up in the headcount, but the root cause is the technology, and the connection rarely gets made.

Worth saying plainly: custom platforms aren't without cost. Development is an investment, not a purchase, and maintenance is an ongoing expense. But the cost structure is predictable and doesn't change when your vendor gets acquired or decides to reprice. Moving from 1,000 learners to 10,000 increases server load modestly. Fees stay flat, for the most part.

The numbers over five years tell the story. A SaaS platform supporting ten thousand users commonly reaches a total cost of around $1.6m when you factor in per-user fees, price rises, and the personnel needed to work around its limitations. A custom platform with predictable hosting and maintenance tends to land around $250k over the same period. The crossover usually happens by year two or three.
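If you want to run this comparison on your own numbers, the logic is a cumulative total for each option and the first year in which one overtakes the other. The sketch below shows the mechanics only. The inputs are deliberately made-up round numbers, not the figures quoted above, and the function names are hypothetical.

```python
from typing import Optional


def cumulative_costs(upfront: float, annual: float, growth: float, years: int) -> list[float]:
    """Running total per year, with the annual cost rising by `growth` each year."""
    totals = []
    total = upfront
    cost = annual
    for _ in range(years):
        total += cost
        totals.append(total)
        cost *= 1 + growth
    return totals


def crossover_year(saas: list[float], custom: list[float]) -> Optional[int]:
    """First year (1-based) in which cumulative SaaS spend exceeds custom spend."""
    for year, (saas_total, custom_total) in enumerate(zip(saas, custom), start=1):
        if saas_total > custom_total:
            return year
    return None


# Illustrative inputs only: replace with your own fees, build cost and price rises.
saas = cumulative_costs(upfront=0, annual=100_000, growth=0.10, years=5)
custom = cumulative_costs(upfront=160_000, annual=30_000, growth=0.0, years=5)
year = crossover_year(saas, custom)
```

With these placeholder inputs the SaaS total overtakes the custom total in year three; your own inputs may land earlier, later, or not at all within five years.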

Calculate the cost of SaaS versus custom using this free pricing calculator

Hardest to quantify is the cost you can't see at all. An idea your platform couldn't support, so you never built it. A feature your competitor launched that you couldn't match, so some clients left. A deal you priced yourself out of because your costs made you uncompetitive. None of these appear on a P&L. You just notice, eventually, that growth is slower than it should be.

4. Data

Most training companies are sitting on learner data they can't use. Not because it doesn't exist, but because nobody has built the systems to surface it in a way that actually informs decisions.

When you have fifty learners, you notice things. You can tell who's struggling, which content isn't landing, which clients are going quiet. When you have fifty thousand, you can't notice anything without systems that do it for you. Data is what makes a large learner base manageable rather than opaque. Without it, you're making product and retention decisions at scale based on instinct.

The questions most platforms let you answer are the obvious ones. How many people finished the course. How many logged in this week. These are fine as far as they go, but the more useful questions are specific to how your business operates and what success actually looks like for you. Which content causes people to drop off. Which learning paths correlate with better outcomes three months later. Which clients are quietly disengaging before they pick up the phone to cancel. Generic platforms can't answer these because they weren't built with your definition of success in mind.
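To make the drop-off question concrete, here is a minimal sketch that works out, for each module in a course, what share of the learners who reached it went no further. The input shape (a count of completed modules per learner) is an assumption, and the sketch treats every unfinished learner as having stopped; a real version would apply an inactivity cutoff first.

```python
from collections import Counter


def drop_off_by_module(modules: list[str], completed_counts: dict[str, int]) -> dict[str, float]:
    """Share of learners who reached each module but did not complete it.

    `completed_counts` maps a learner id to how many modules they have
    completed, in order (0 means they never finished the first module).
    """
    stopped_at = Counter(completed_counts.values())
    remaining = len(completed_counts)  # learners who reached the current module
    rates: dict[str, float] = {}
    for index, module in enumerate(modules):
        if remaining == 0:
            rates[module] = 0.0
            continue
        stopped = stopped_at.get(index, 0)
        rates[module] = stopped / remaining
        remaining -= stopped
    return rates


course = ["intro", "theory", "assessment"]
progress = {"a": 3, "b": 1, "c": 1, "d": 0}
rates = drop_off_by_module(course, progress)
worst = max(rates, key=rates.get)
```

In this made-up data, most of the learners who reach the second module stop there, which is the kind of signal a completion-rate total hides.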

For B2B training companies this is also a retention argument. Keeping clients depends on being able to show them the value they're getting. A spreadsheet of completion rates doesn't do that. Reporting that shows compliance status across their organisation, identifies learners at risk, and connects training activity to outcomes they actually care about - that's something worth renewing a contract for.

5. Agility

Agility is the ability to move when something changes outside your control. A competitor launches a feature your clients are asking about. A regulation comes into effect. A prospective client has specific technical demands your current platform can't meet.

The failure state isn't dramatic. You don't lose everything at once. You start losing deals you would previously have won. Churn creeps up because a competitor's product looks fresher. Your team starts saying "we can't do that" more often than they say "we can."

Regulatory compliance is where deals start to disappear before they're even properly lost. Data residency is the most common example. Many enterprise clients, governments, and public sector organisations require learner data to be stored and processed in their country. If your platform can't accommodate that, the deal is over before it started. HIPAA is a more acute version of the same problem. There are very few genuinely HIPAA-compliant LMSs, and the ones that are tend to have built compliance in at the expense of everything else. Healthcare organisations that want a modern, engaging learning experience and HIPAA compliance usually find they can't get both from an off-the-shelf platform.
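At its simplest, data residency comes down to the platform knowing which region each client's data must live in and refusing to store it anywhere else. The sketch below illustrates that idea only; the region codes, store names and `Tenant` type are hypothetical, and real compliance involves far more than routing.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical region-to-datastore mapping.
REGION_STORES = {"eu": "eu-central-db", "uk": "uk-south-db", "us": "us-east-db"}


@dataclass(frozen=True)
class Tenant:
    name: str
    required_region: Optional[str] = None  # None means no residency requirement


def storage_for(tenant: Tenant, default_region: str = "us") -> str:
    """Return the datastore for a tenant, failing loudly if the region is unsupported."""
    region = tenant.required_region or default_region
    if region not in REGION_STORES:
        raise ValueError(f"No datastore available in region '{region}' for {tenant.name}")
    return REGION_STORES[region]
```

Failing loudly matters here: silently falling back to a default region is exactly the outcome a residency requirement exists to prevent.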

Agility also determines how meaningfully you can differentiate through user experience. Most training platforms offer broadly the same thing: video lessons, quizzes, a certificate at the end. When your platform gives you the freedom to build beyond that (more interactive content, personalised learning paths, a genuinely better interface), you're offering something your competitors can't easily replicate. Better experiences produce better completion rates. Better outcomes justify higher fees. Clients who get real value from the training renew. All of this compounds over time in ways that show up in the numbers.

The companies that stay agile tend to have made one decision well early on: they kept control of the platform. When the business needed to change direction, the technology could follow.

6. Expansion

Where agility is reactive, expansion is deliberate. It means choosing to enter new territory (a new market, a new type of customer, a new commercial model) and needing the platform to support that move.

Most training companies start with one product, one audience, one geography. That's often the right way to start, but a business that's still selling in only one way to only one type of customer is more fragile than it looks. Each successful expansion reduces that dependency risk. A company that also sells B2B, operates in multiple geographies, and white-labels to partners isn't just bigger, it's more resilient. That's a different kind of scalability. Not just more users, but a more durable business.

The most common version we see is training companies moving into the enterprise market. Larger contracts, longer relationships, more predictable revenue. It's often where the real growth is. But selling to businesses rather than individuals changes what the platform needs to do significantly. You need multi-tenancy, the ability to give each client their own environment with their own users, branding, and reporting. You need seat management tools your clients can use themselves. You need compliance tracking at an organisational level, not just an individual one. None of this is exotic, but it requires a platform that can be shaped around a new commercial model rather than one that locks you into the model it was designed for.
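In data-model terms, multi-tenancy with self-serve seat management can start from something as small as the sketch below. The `Tenant` fields, the `SeatLimitReached` error and the method names are all hypothetical, chosen to illustrate the idea rather than describe a specific platform.

```python
from dataclasses import dataclass, field


class SeatLimitReached(Exception):
    """Raised when a client tries to add a learner beyond their purchased seats."""


@dataclass
class Tenant:
    name: str
    brand_colour: str          # each client gets their own branding
    seat_limit: int            # seats purchased under the contract
    learners: set[str] = field(default_factory=set)

    def add_learner(self, email: str) -> bool:
        """Add a learner; return False if they already hold a seat."""
        email = email.strip().lower()
        if email in self.learners:
            return False
        if len(self.learners) >= self.seat_limit:
            raise SeatLimitReached(f"{self.name} has used all {self.seat_limit} seats")
        self.learners.add(email)
        return True

    def remove_learner(self, email: str) -> None:
        """Free a seat when someone leaves the client's organisation."""
        self.learners.discard(email.strip().lower())

    def seats_free(self) -> int:
        return self.seat_limit - len(self.learners)
```

Because each tenant carries its own users and limits, the client's administrator can add starters and remove leavers without contacting you.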

Geographical expansion introduces a different set of demands. Data residency requirements that vary by country. Payment processors that work in the markets you're entering. Mobile-first design for markets where most learners are on a phone rather than a desktop. Each new market has its own version of this list.

White-labelling is another common expansion path, offering your platform to other businesses under their brand, as a product rather than a service. We've built this for several clients who serve different industry verticals under different brands. Each one looks and feels entirely distinct but runs on shared infrastructure, which makes the economics of maintaining multiple products considerably more manageable.

The common thread is that expansion requires the platform to do something it wasn't originally designed to do. When it can't, the only option is to migrate to one that can. And migrations are expensive, disruptive, and time-consuming in ways that always get underestimated. Data to transfer. Integrations to rebuild. Clients to manage through the disruption. The business effectively pauses while it moves house, and some clients won't wait. Every migration is a reset: months of work to get back to where you were before you can move forward again.

When the platform can support it, expansion compounds. The infrastructure you built to serve enterprise clients also supports white-labelling. The multi-tenancy you developed for one vertical works for the next. Each move builds on the last rather than requiring a rebuild from scratch.

These pillars don't exist in isolation

They interact in ways that aren't always obvious until something goes wrong. A data problem limits your agility because you can't move quickly without knowing where the issues are. An infrastructure problem creates a cost problem because you end up spending on maintenance and headcount that better early decisions would have avoided. A workflow problem slows expansion because your team can't onboard new clients at speed if every enrolment is manual.

The companies that scale make platform decisions that account for all six, not necessarily all at once, but with a clear sense of where they're heading.

The ones that struggle tend to fix whatever is most visibly broken without asking what caused it. They bump up the server. They hire another operations person. They switch platforms. Six months later, a different symptom of the same problem appears.

If any of this resonates - if you're turning down deals, losing clients, or making decisions based on what your platform allows rather than what your business needs - it's usually worth looking at the full picture rather than the loudest problem.

That's what our Blueprint process is for. A series of workshops where we work through your current situation, your growth goals, and the gap between them, and produce a plan for a platform that can support all of it. Not only what you need today, but what you'll need in three to five years. Learn more here.

If you'd like to start that conversation, get in touch.

Kaine Shutler is the founder and managing director of Plume, a studio specialising in custom learning technology. With 14 years of experience, Kaine has established expertise in Learning Management Systems, UI/UX design, and scalability, working with clients including Google and training businesses across multiple sectors.

6 Pillars of LMS Scalability

Words by

Kaine Shutler

TL;DR

Key takeaways

Scalability isn't just a technical problem. Infrastructure is one of six dimensions where growth either works or it doesn't — and it's rarely the most important one.

The costs that hurt most don't appear on any invoice. Missed deals, features you couldn't build, clients who left for a competitor with a better product — these show up in slower growth, not on a P&L.

Every migration is a reset. The training companies that scale without major disruption tend to have built something they never had to move away from.

Most training companies don't think seriously about scalability until they're already in trouble. A big client pushes them to onboard five hundred learners in a week. A competitor launches something they can't replicate. A platform they've built the business on starts falling apart under the weight of their own growth.

By that point, the options are narrower than they should be and the decisions are more expensive.

We work with training companies at various stages of growth, and the pattern is consistent. The businesses that scale without major disruption tend to have made better platform decisions earlier, ones that accounted for what the business might need to do in two or three years, not just what it needed to do that month.

Scalability gets talked about mostly in technical terms. Server capacity. Concurrent users. Load times. These things matter, but they're only part of it. A platform can handle a million users and still be the thing that stops the business from growing, because infrastructure is just one of the dimensions where scale either works or it doesn't.

We think there are six. Here's how we look at each of them.

1. Infrastructure

When people talk about LMS infrastructure, they usually mean servers. That's a narrow definition - infrastructure is really everything a learner touches, which means the problem starts much earlier than most people assume.

Think about what happens before someone logs in for the first time. They read about your training, decide they want it, and click through to buy. If the checkout page feels like it belongs to a different company, something breaks in that moment. Not technically, but in terms of trust. Then they land in the LMS and it looks like a third company. By the time they actually start learning, the product has already undermined their confidence in it.

Most training companies think of this as a branding problem. It isn't. It's an infrastructure problem, because it's structural. It's what happens when a product is built in pieces rather than designed as a whole.

The same thing happens inside the platform. Many training products are really five or six tools held loosely together. The LMS is here. The community is somewhere else. Assessments live in a third place. Surveys go out through a fourth. Each requires a different login, has a different interface, follows different logic. Learners don't articulate this as a problem. They just find the product confusing and effortful, and gradually engage with it less. You see it in completion rates. You see it in renewals. You rarely see it in feedback, because nobody writes in to say the experience felt fragmented.

Then there's the technical layer, which is where most people start this conversation.

Most platform decisions are made to solve the problem in front of you at the time. The cheapest option that works. The fastest to launch. The one your developer already knows. These are reasonable decisions under the constraints that existed when you made them. The problem is that the constraints change as the business grows, and the platform doesn't change with them. What worked at two hundred learners starts to show strain at two thousand. What worked at two thousand becomes the thing holding you back at twenty thousand.

A platform that handles five hundred learners comfortably can behave very differently with five thousand. When that happens, the instinct is to find a workaround rather than fix the underlying problem. License another tool to fill the gap. Build a manual process around the limitation. Each workaround adds complexity and cost without moving the ceiling.

The companies that handle this well don't wait until the current platform is failing to think about what comes next. They start that conversation while things are still working, which means they have the time and the headroom to make good decisions rather than urgent ones. A migration planned over six months looks completely different to one that has to happen in six weeks because the system fell over.

When that conversation happens early enough, the solution is usually more straightforward than people expect. Design the ecosystem as a whole rather than assembling it in pieces. Single sign-on across every product. A consistent experience from the first marketing touchpoint through to the last lesson. A technical foundation chosen for where the business is going, not where it is today. And critically, architecture that doesn't lock you in, because the decisions you make at the start should expand your options over time, not reduce them.

2. Workflow efficiency

Infrastructure failure is visible. Workflow failure tends to become invisible, because the manual workarounds people put in place eventually just become the job. Nobody questions why they spend every Monday processing a spreadsheet of new starters. It's just Monday.

It usually starts with one person whose job involves a lot of manual tasks that should be automated. Watching for purchase notifications and manually creating user accounts. Enrolling learners. Compiling monthly reports for B2B clients by pulling data from the LMS and building spreadsheets by hand. Managing requests that pile up in a support inbox.

At a hundred users, this is manageable. At a thousand, you hire someone to help. At ten thousand, you have a team, and the team is the bottleneck.

The goal isn't to remove people from the business. It's to make sure people are doing work that requires human judgement, not work that a well-configured system should be handling automatically.

Take new cohort onboarding. A client emails a spreadsheet of thirty new starters. Someone on your team processes it, creates the accounts, assigns the learning paths, and sends the welcome emails. Multiply that by every client, every month, and it becomes a significant drain on time that could be spent elsewhere. The better model is giving clients a self-serve import tool, or better still, an integration with their HR system that handles new starters automatically the moment they're added. The client doesn't wait. Your team doesn't intervene. It just happens.

The same applies to engagement. The most common mistake after companies start surfacing engagement data is routing it to a human and expecting them to act on it. When a learner isn't engaging, notifying their manager shouldn't be the end of the workflow. When you ask a manager to review a report and chase up a learner, you're adding workload and friction. You're also giving them the option not to bother. The system should be doing everything it reasonably can to keep learners engaged and progressing without human intervention. Reminders. Course recommendations. Re-enrolments. Nudges at the right moment in the right format. Every time a human has to intervene to keep a learner on track, you've introduced a step that may or may not happen.

Certifications expire. Someone is usually responsible for tracking who's due, chasing them, re-enrolling them, and updating their record when they complete. At scale it becomes a compliance risk, because the process depends on someone remembering to do it and then doing it without error. The whole thing should run automatically: expiry triggers a re-enrolment, a reminder sequence fires, and the record updates on completion without anyone touching it.

For reporting, the tools baked into most LMSs were built for a generic business; not yours. They surface raw data and leave you to interpret it. B2B training companies need to present that data in a way that demonstrates value to their clients. Which learners have completed which modules. Which clients are at compliance risk. Where engagement is dropping off. The companies that handle this well build reporting their clients can log into and use themselves. A live view of the information that matters, available without anyone having to produce it.

And if your B2B clients contact you every time they need to add a new starter or remove a leaver, that's friction for them and an administrative burden for you. Give clients the ability to manage their own users and both problems go away.

These are the most visible examples, but the principle runs through everything. Role changes that should trigger a new learning path but don't, because nobody told the LMS. Failed assessments that sit unaddressed because there's no automated follow-up. Each of these is a place where the system is asking a human to do something a system should do, and each one compounds as the business grows.

3. Cost

The cost conversation in LMS decisions almost always starts with the wrong number. The monthly fee, or the per-user rate. These are visible and easy to compare. What's harder to see is what the cost structure looks like at five times your current scale.

Per-user SaaS pricing sounds reasonable until the numbers get large. At $5 per user per month, 500 learners cost $30,000 a year. Add a 10,000-learner enterprise client and the same pricing model costs $600,000. You can pass that cost on, but now you're more expensive than a competitor who doesn't carry it. You can absorb it, but your margins take the hit.

And this is before accounting for price rises that have nothing to do with your growth. SaaS pricing across the industry rose by an average of 11.4 percent in 2025. But even without price rises, SaaS models are designed to cost more as you grow. Per-user pricing is the most visible version of that, but unlimited plans have their own version — features locked behind higher tiers, API limits that become relevant at scale, storage caps, support that costs extra when you actually need it. There's always something.

Nobody factors migration into the original platform decision. They should. Moving from one system to another takes months of internal time, costs money to execute, and disrupts clients in the process. When it goes wrong — data that doesn't transfer cleanly, learners who lose access, integrations that break — clients leave. And if you're on the wrong platform, you'll go through this more than once. Each time you outgrow a SaaS and move to the next one, the clock resets. When you build something designed to scale with the business, you move once.

There are also costs that never show up anywhere obvious. If your platform lacks features or integrations, you hire people to compensate. Extra support staff because the platform can't handle self-serve. Operations people because enrolments are manual. Account managers because reporting has to be compiled by hand. These show up in the headcount but the root cause is the technology, and the connection rarely gets made.

Worth saying plainly: custom platforms aren't without cost. Development is an investment, not a purchase, and maintenance is an ongoing expense. But the cost structure is predictable and doesn't change when your vendor gets acquired or decides to reprice. Moving from 1,000 learners to 10,000 increases server load modestly. Fees stay flat, for the most part.

The numbers over five years tell the story. A SaaS platform supporting ten thousand users commonly reaches a total cost of around $1.6m when you factor in per-user fees, price rises, and the personnel needed to work around its limitations. A custom platform with predictable hosting and maintenance tends to land around $250k over the same period. The crossover usually happens by year two or three.
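If you want to find the crossover point for your own business, the calculation can be sketched as below. The yearly figures are hypothetical placeholders, not the breakdown behind the totals quoted above, and `crossover_year` is a name we've made up for this sketch. Replace the inputs with your own quotes and forecasts.

```python
from itertools import accumulate


def crossover_year(saas_yearly, custom_yearly):
    """Return the first year (1-based) in which cumulative custom cost is at
    or below cumulative SaaS cost, or None if that never happens."""
    saas_totals = accumulate(saas_yearly)
    custom_totals = accumulate(custom_yearly)
    for year, (saas, custom) in enumerate(zip(saas_totals, custom_totals), start=1):
        if custom <= saas:
            return year
    return None


# Hypothetical inputs in dollars per year (assumptions for illustration only).
saas = [60_000, 90_000, 140_000, 200_000, 260_000]  # fees rising with learner growth
custom = [150_000, 25_000, 25_000, 25_000, 25_000]  # build up front, then upkeep

year = crossover_year(saas, custom)
print(f"Custom becomes cheaper overall in year {year}")
```

The shape matters more than the exact numbers: custom costs are front-loaded and then flat, while SaaS costs start low and climb with every learner you add.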

Calculate the cost of SaaS versus custom using this free pricing calculator

Hardest to quantify is the cost you can't see at all. An idea your platform couldn't support, so you never built it. A feature your competitor launched that you couldn't match, so some clients left. A deal you priced yourself out of because your costs made you uncompetitive. None of these appear on a P&L. You just notice, eventually, that growth is slower than it should be.

4. Data

Most training companies are sitting on learner data they can't use. Not because it doesn't exist, but because nobody has built the systems to surface it in a way that actually informs decisions.

When you have fifty learners, you notice things. You can tell who's struggling, which content isn't landing, which clients are going quiet. When you have fifty thousand, you can't notice anything without systems that do it for you. Data is what makes a large learner base manageable rather than opaque. Without it, you're making product and retention decisions at scale based on instinct.

The questions most platforms let you answer are the obvious ones. How many people finished the course. How many logged in this week. These are fine as far as they go, but the more useful questions are specific to how your business operates and what success actually looks like for you. Which content causes people to drop off. Which learning paths correlate with better outcomes three months later. Which clients are quietly disengaging before they pick up the phone to cancel. Generic platforms can't answer these because they weren't built with your definition of success in mind.
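As one example, spotting a client who is quietly disengaging can start with something as simple as comparing recent activity against their own history. The sketch below assumes you can export weekly active learner counts per client. The function name, the four-week window, and the 70 percent threshold are all illustrative assumptions, and the data is made up.

```python
from statistics import mean


def disengaging_clients(weekly_active, recent_weeks=4, drop_threshold=0.7):
    """Flag clients whose average weekly active learners over the most recent
    weeks fell below drop_threshold times their earlier average."""
    flagged = []
    for client, counts in weekly_active.items():
        if len(counts) <= recent_weeks:
            continue  # not enough history to compare against
        earlier = mean(counts[:-recent_weeks])
        recent = mean(counts[-recent_weeks:])
        if earlier > 0 and recent < drop_threshold * earlier:
            flagged.append(client)
    return flagged


# Hypothetical data: weekly active learners per client, oldest week first.
activity = {
    "client_a": [120, 118, 125, 121, 119, 117, 122, 120],  # steady
    "client_b": [80, 82, 79, 81, 60, 52, 45, 38],          # sliding
}

at_risk = disengaging_clients(activity)
print(at_risk)
```

A real version would use whatever signal matters in your business, but the principle is the same: the system notices the drop so that nobody has to.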

For B2B training companies this is also a retention argument. Keeping clients depends on being able to show them the value they're getting. A spreadsheet of completion rates doesn't do that. Reporting that shows compliance status across their organisation, identifies learners at risk, and connects training activity to outcomes they actually care about - that's something worth renewing a contract for.

5. Agility

Agility is the ability to move when something changes outside your control. A competitor launches a feature your clients are asking about. A regulation comes into effect. A prospective client has specific technical demands your current platform can't meet.

The failure state isn't dramatic. You don't lose everything at once. You start losing deals you would previously have won. Churn creeps up because a competitor's product looks fresher. Your team starts saying "we can't do that" more often than they say "we can."

Regulatory compliance is where deals start to disappear before they're even properly lost. Data residency is the most common example. Many enterprise clients, governments, and public sector organisations require learner data to be stored and processed in their country. If your platform can't accommodate that, the deal is over before it started. HIPAA is a more acute version of the same problem. There are very few genuinely HIPAA-compliant LMSs, and the ones that are tend to have built compliance in at the expense of everything else. Healthcare organisations that want a modern, engaging learning experience and HIPAA compliance usually find they can't get both from an off-the-shelf platform.

Agility also determines how meaningfully you can differentiate through user experience. Most training platforms offer broadly the same thing: video lessons, quizzes, a certificate at the end. When your platform gives you the freedom to build beyond that (more interactive content, personalised learning paths, a genuinely better interface), you're offering something your competitors can't easily replicate. Better experiences produce better completion rates. Better outcomes justify higher fees. Clients who get real value from the training renew. All of this compounds over time in ways that show up in the numbers.

The companies that stay agile tend to have made one decision well early on: they kept control of the platform. When the business needed to change direction, the technology could follow.

6. Expansion

Where agility is reactive, expansion is deliberate. It means choosing to enter new territory, whether a new market, a new type of customer, or a new commercial model, and needing the platform to support that move.

Most training companies start with one product, one audience, one geography. That's often the right way to start, but a business that's still selling in only one way to only one type of customer is more fragile than it looks. Each successful expansion reduces that dependency risk. A company that also sells B2B, operates in multiple geographies, and white-labels to partners isn't just bigger; it's more resilient. That's a different kind of scalability. Not just more users, but a more durable business.

The most common version we see is training companies moving into the enterprise market. Larger contracts, longer relationships, more predictable revenue. It's often where the real growth is. But selling to businesses rather than individuals changes what the platform needs to do significantly. You need multi-tenancy, the ability to give each client their own environment with their own users, branding, and reporting. You need seat management tools your clients can use themselves. You need compliance tracking at an organisational level, not just an individual one. None of this is exotic, but it requires a platform that can be shaped around a new commercial model rather than one that locks you into the model it was designed for.
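To make multi-tenancy and seat management concrete, here is a minimal sketch of the data model. The `Tenant` class, its fields, and the example client are hypothetical, and a real platform would back this with a database and access controls, but it shows the idea: each client has its own users, branding, and seat limit, and can manage its own starters and leavers.

```python
from dataclasses import dataclass, field


@dataclass
class Tenant:
    """One client organisation with its own users, branding, and seat limit."""
    name: str
    seat_limit: int
    brand_colour: str = "#000000"
    users: set[str] = field(default_factory=set)

    def add_user(self, email: str) -> None:
        """Self-serve enrolment: the client adds a learner if a seat is free."""
        if email in self.users:
            return  # already enrolled, nothing to do
        if len(self.users) >= self.seat_limit:
            raise ValueError(f"{self.name} has no free seats")
        self.users.add(email)

    def remove_user(self, email: str) -> None:
        """Removing a leaver frees their seat."""
        self.users.discard(email)

    def seats_free(self) -> int:
        return self.seat_limit - len(self.users)


# Hypothetical client managing its own starters and leavers.
acme = Tenant(name="Acme Ltd", seat_limit=2, brand_colour="#0055aa")
acme.add_user("a@acme.example")
acme.add_user("b@acme.example")
acme.remove_user("a@acme.example")
print(acme.seats_free())
```

Keeping the seat limit on the tenant rather than in a contract spreadsheet is what lets clients add and remove their own users without contacting you.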

Geographical expansion introduces a different set of demands. Data residency requirements that vary by country. Payment processors that work in the markets you're entering. Mobile-first design for markets where most learners are on a phone rather than a desktop. Each new market has its own version of this list.

White-labelling is another common expansion path, offering your platform to other businesses under their brand, as a product rather than a service. We've built this for several clients who serve different industry verticals under different brands. Each one looks and feels entirely distinct but runs on shared infrastructure, which makes the economics of maintaining multiple products considerably more manageable.

The common thread is that expansion requires the platform to do something it wasn't originally designed to do. When it can't, the only option is to migrate to one that can. And migrations are expensive, disruptive, and time-consuming in ways that always get underestimated. Data to transfer. Integrations to rebuild. Clients to manage through the disruption. The business effectively pauses while it moves house, and some clients won't wait. Every migration is a reset: months of work to get back to where you were before you can move forward again.

When the platform can support it, expansion compounds. The infrastructure you built to serve enterprise clients also supports white-labelling. The multi-tenancy you developed for one vertical works for the next. Each move builds on the last rather than requiring a rebuild from scratch.

These pillars don't exist in isolation

They interact in ways that aren't always obvious until something goes wrong. A data problem limits your agility because you can't move quickly without knowing where the issues are. An infrastructure problem creates a cost problem because you end up spending on maintenance and headcount that better early decisions would have avoided. A workflow problem slows expansion because your team can't onboard new clients at speed if every enrolment is manual.

The companies that scale make platform decisions that account for all six, not necessarily all at once, but with a clear sense of where they're heading.

The ones that struggle tend to fix whatever is most visibly broken without asking what caused it. They bump up the server. They hire another operations person. They switch platforms. Six months later, a different symptom of the same problem appears.

If any of this resonates - if you're turning down deals, losing clients, or making decisions based on what your platform allows rather than what your business needs - it's usually worth looking at the full picture rather than the loudest problem.

That's what our Blueprint process is for. A series of workshops where we work through your current situation, your growth goals, and the gap between them, and produce a plan for a platform that can support all of it. Not only what you need today, but what you'll need in three to five years. Learn more here.

If you'd like to start that conversation, get in touch.

Kaine Shutler is the founder and managing director of Plume, a UK-based agency specialising in custom learning technology. With 14 years of experience, Kaine has established expertise in Learning Management Systems, UI/UX design, and scalability, working with clients including Google and training businesses across multiple sectors.

Plan your next learning platform with our founder

About Plume

As the leading custom LMS provider serving training businesses in the US, UK and Europe, we help businesses design, build and grow pioneering learning tech that unlocks limitless growth potential.
